Bin size vs. jitter: your application note

The variation between successive clock periods, referred to as cycle-to-cycle jitter, is a major source of uncertainty in time-domain measurements. In single-shot measurements, timing jitter contributes directly to the overall uncertainty and, depending on its magnitude relative to the TDC bin size, may dominate the result.
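As a rough guide, assume the jitter has an RMS value $\sigma_J$, is independent of the quantization error, and that the signal phase is uniformly distributed relative to the TDC bins. Under these assumptions the two contributions to a single timestamp add in quadrature, with the bin size $b$ contributing the standard deviation of a uniform quantizer:

$$
\sigma_t = \sqrt{\sigma_J^2 + \frac{b^2}{12}}
$$

Jitter therefore only dominates once $\sigma_J$ exceeds $b/\sqrt{12} \approx 0.29\,b$.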

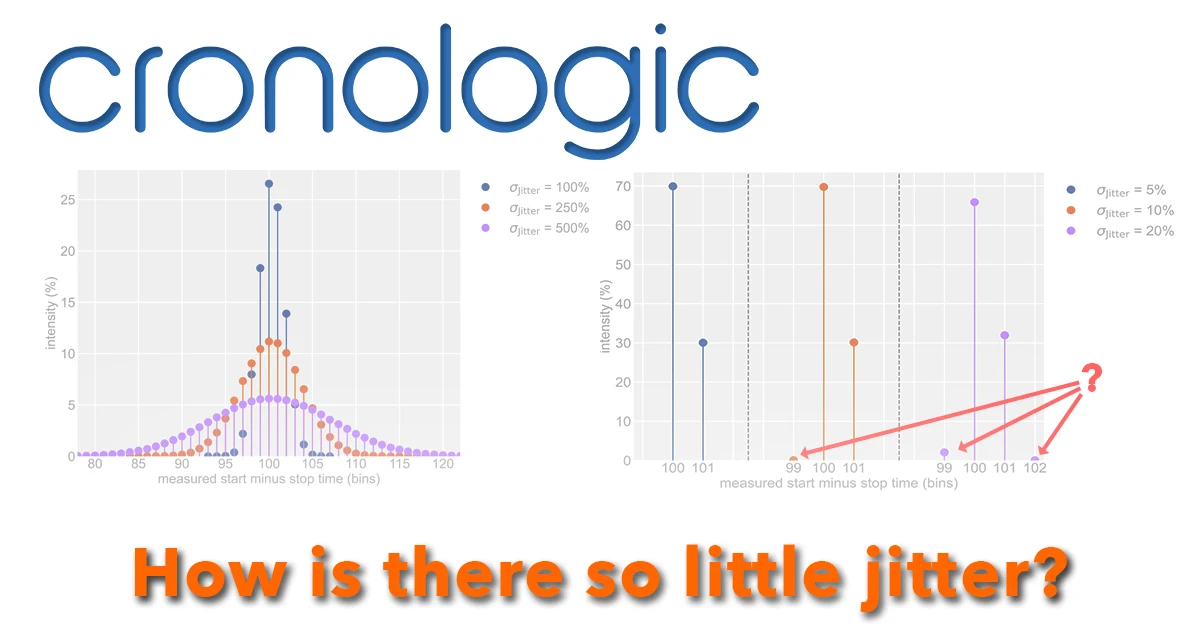

A common assumption is that, because of this jitter, a cable-delay measurement yields a timing distribution with an FWHM of approximately two bins. However, if you evaluate our TDCs with a simple cable-delay test, the result may be surprising: these measurements show that, for our devices, timing jitter is simply not the limiting factor for measurement accuracy.
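For reference, the histogram of an ideal TDC in a cable-delay test can be worked out directly. This is a sketch under stated assumptions: perfectly uniform bins of width $b$, no jitter, and a start pulse whose phase relative to the bin grid is uniformly random. Writing the cable delay as $d$, the measured interval $\Delta$ (in bins) takes only two values:

$$
d = (n + f)\,b, \qquad n \in \mathbb{Z},\; 0 \le f < 1
$$

$$
P(\Delta = n) = 1 - f, \qquad P(\Delta = n + 1) = f
$$

$$
\langle \Delta \rangle\, b = (n + f)\,b = d, \qquad \sigma_{\Delta t}^2 = f(1 - f)\,b^2 \le \frac{b^2}{4}
$$

The ideal histogram thus occupies at most two adjacent bins, and its mean reproduces the true delay. Averaged over a uniformly distributed $f$, the variance is $b^2/6$, because $\int_0^1 f(1-f)\,df = 1/6$.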

Our high-end TDCs, such as the xHPTDC8 and xTDC4, are implemented using dedicated ASICs that provide a highly controlled and uniform bin size. Consequently, the remaining jitter contributions are significantly smaller than the intrinsic quantization error of the TDC. For short time intervals, the measurement uncertainty is therefore predominantly determined by the bin size.
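To see when the bin size rather than the jitter sets the limit, the two contributions to a cable-delay measurement can be combined numerically. The sketch below is a minimal model, assuming independent jitter of RMS `jitter_rms` on both the start and the stop channel, ideal uniform bins of width `bin_size`, and a delay whose fractional part is uniformly distributed (so the quantization variance averages to `bin_size**2 / 6`). The function names and example values are hypothetical and are not specifications of any instrument.

```python
import math


def interval_rms(bin_size: float, jitter_rms: float) -> float:
    """RMS error of one time-interval (cable-delay) measurement.

    Hypothetical model: independent jitter of `jitter_rms` on start and stop,
    ideal uniform bins of width `bin_size`, and a uniformly distributed
    fractional delay, so the quantization variance averages to bin_size**2 / 6.
    """
    quantization_var = bin_size ** 2 / 6.0
    jitter_var = 2.0 * jitter_rms ** 2  # start and stop jitter add in quadrature
    return math.sqrt(quantization_var + jitter_var)


def jitter_share(bin_size: float, jitter_rms: float) -> float:
    """Fraction of the total interval variance that is caused by jitter."""
    jitter_var = 2.0 * jitter_rms ** 2
    return jitter_var / interval_rms(bin_size, jitter_rms) ** 2


if __name__ == "__main__":
    b = 1.0  # express everything in units of one bin
    for sigma_j in (0.0, 0.1, 0.3, 1.0):
        print(f"sigma_J = {sigma_j:.1f} b -> total RMS = "
              f"{interval_rms(b, sigma_j):.3f} b, jitter share = "
              f"{jitter_share(b, sigma_j):.0%}")
```

In this model both terms contribute equally at $\sigma_J = b/\sqrt{12} \approx 0.29\,b$; below that point, the bin size dominates the interval uncertainty.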

In our Application Note “Jitter vs. Bin Size”, we compare the results of cable-delay tests obtained with ideal TDC models, typical commercial instruments, and our own devices.

In this animation, an ideal TDC measurement is compared with a measurement that exhibits significant timing jitter. Compare the resulting histograms with those obtained from our devices (see the Application Note).
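The comparison shown in the animation can be reproduced qualitatively with a short Monte Carlo sketch. It models an idealized TDC (perfectly uniform bins, no nonlinearity), gives each event a random phase relative to the bin grid, and optionally adds Gaussian jitter to the start and stop timestamps. The function name, the Gaussian jitter model, and the example values (a delay of 10.3 bins, 1 bin of RMS jitter per channel) are assumptions chosen for illustration only.

```python
import math
import random
from collections import Counter


def cable_delay_histogram(delay, bin_size, jitter_rms, n_events, seed=0):
    """Monte Carlo sketch of a cable-delay test on an idealized TDC.

    Hypothetical model: random start phase within one bin, independent
    Gaussian jitter (`jitter_rms`) on start and stop, and floor() quantization
    to bins of width `bin_size`. Returns a Counter that maps the measured
    interval (in bins) to the number of events.
    """
    rng = random.Random(seed)
    hist = Counter()
    for _ in range(n_events):
        t_start = rng.uniform(0.0, bin_size)  # random phase relative to bins
        t_stop = t_start + delay
        if jitter_rms > 0.0:
            t_start += rng.gauss(0.0, jitter_rms)
            t_stop += rng.gauss(0.0, jitter_rms)
        measured = math.floor(t_stop / bin_size) - math.floor(t_start / bin_size)
        hist[measured] += 1
    return hist


if __name__ == "__main__":
    # Illustrative values only: delay of 10.3 bins, bin size 1.
    for label, sigma in (("ideal TDC", 0.0), ("with jitter", 1.0)):
        hist = cable_delay_histogram(10.3, 1.0, sigma, 100_000)
        print(label)
        for bin_index in sorted(hist):
            print(f"  {bin_index:3d}: {hist[bin_index]}")
```

Without jitter, every event lands in bin 10 or 11 with roughly a 70:30 split, which is the two-bin result expected for an ideal TDC. With jitter, the histogram spreads over several bins while its mean stays at the true delay.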